01. Teaching with AI: https://openai.com/blog/teaching-with-ai

We’re releasing a guide for teachers using ChatGPT in their classroom—including suggested prompts, an explanation of how ChatGPT works and its limitations, the efficacy of AI detectors, and bias.

How teachers are using ChatGPT

- Role-playing challenging conversations

- Building quizzes, tests, and lesson plans from curriculum materials

- Reducing friction for non-English speakers

- Teaching students about critical thinking

02. Google Gemini Eats The World – Gemini Smashes GPT-4 By 5X, The GPU-Poors:

https://www.semianalysis.com/p/google-gemini-eats-the-world-gemini

Before Covid, Google released the MEENA model, which, for a short period, was the best large language model in the world.

The GPUs vs TPUs fight is an interesting one.

GPUs and TPUs are both types of hardware accelerators used to speed up machine learning workloads, but there are some key differences between the two:

Architecture: GPUs have a more generalized architecture that can be used for a variety of computational tasks, including machine learning. TPUs, on the other hand, are specifically designed for deep learning, which can lead to better performance for some neural network workloads.

Memory: GPUs typically have larger memory capacities than TPUs, which can be beneficial for certain types of workloads that require large amounts of memory.

Precision: TPUs are designed to perform computations at lower precision than GPUs, which can reduce memory requirements and improve performance for some deep learning workloads.

Price: TPUs are generally more expensive than GPUs, both in terms of the hardware itself and the cloud instances that run them. This can make them less accessible to small-scale machine learning projects or individual developers.

In short: GPUs are general-purpose accelerators suited to a range of computational tasks, while TPUs are specialized for deep learning and can offer better performance on those workloads.
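The precision point above can be made concrete with a little arithmetic. This is a minimal sketch, not a benchmark of any real accelerator: the 7-billion-parameter model size is a hypothetical figure chosen for illustration, and IEEE half precision (`float16`) stands in for the lower-precision formats accelerators use, since Python's standard library has no bfloat16 type.

```python
import struct

# Bytes needed to store one value in each format, per the struct module.
BYTES_PER_VALUE = {
    "float32": struct.calcsize("<f"),  # single precision
    "float16": struct.calcsize("<e"),  # IEEE half precision (stand-in for lower precision)
}

def weights_size_gb(num_params, fmt):
    """Memory needed to hold num_params weights in the given format, in GB."""
    return num_params * BYTES_PER_VALUE[fmt] / 1e9

def round_trip_float16(x):
    """Store x as float16 and read it back, exposing the rounding error."""
    return struct.unpack("<e", struct.pack("<e", x))[0]

NUM_PARAMS = 7_000_000_000  # hypothetical model size, for illustration only

fp32_gb = weights_size_gb(NUM_PARAMS, "float32")
fp16_gb = weights_size_gb(NUM_PARAMS, "float16")
rounding_error = abs(round_trip_float16(3.14159) - 3.14159)

print(f"float32 weights: {fp32_gb:.1f} GB")
print(f"float16 weights: {fp16_gb:.1f} GB")
print(f"float16 rounding error on 3.14159: {rounding_error:.6f}")
```

Halving the bytes per value halves the memory footprint, at the cost of a small rounding error on each stored number.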

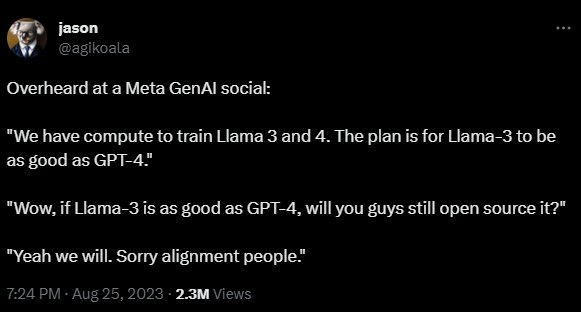

03. Meta plans to take on GPT-4 with a rumored Llama 3, which is still free

https://the-decoder.com/meta-plans-to-take-on-gpt-4-with-a-rumored-llama-3-which-is-still-free

The rumor comes from OpenAI engineer Jason Wei, formerly of Google Brain, who attended a Generative AI Group social event organized by Meta. Wei says he overheard a conversation suggesting that Meta now has enough computing power to train Llama 3 and 4. Llama 3 is planned to reach the performance level of GPT-4, but will remain freely available.

Can Llama kill OpenAI?

GPT-4 has a more sophisticated architecture than your standard Llama

GPT-4 likely achieves its high performance by using a more complex mixture-of-experts architecture with 16 expert networks, each with about 111 billion parameters.
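To get a feel for the scale those rumored numbers imply, here is a rough back-of-the-envelope sketch. The expert count and size come from the text above and are unconfirmed; `EXPERTS_PER_TOKEN = 2` is purely an assumption for illustration (mixture-of-experts models typically route each token to only a few experts), and shared non-expert parameters are ignored.

```python
# Rumored figures quoted above (unconfirmed).
NUM_EXPERTS = 16
PARAMS_PER_EXPERT = 111 * 10**9

# Assumption for illustration only: how many experts handle each token.
EXPERTS_PER_TOKEN = 2

# Total parameters held across all experts.
total_expert_params = NUM_EXPERTS * PARAMS_PER_EXPERT

# Expert parameters actually used for a single token under the routing assumption.
active_expert_params = EXPERTS_PER_TOKEN * PARAMS_PER_EXPERT

print(f"Total expert parameters:  {total_expert_params / 1e12:.3f} trillion")
print(f"Active per token (assumed): {active_expert_params / 1e9:.0f} billion")
```

The point of the design is visible in the two numbers: the model can store far more parameters than it has to compute with for any one token.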

OpenAI engineer Jason Wei reports that he has heard that Meta has enough computing power to train Llama 3 to the level of GPT-4. He also says that training Llama 4 is already possible.

While Wei himself is a credible source, his statements may be wrong, or the plans may change.

04. Tesla’s $300 Million AI Cluster Is Going Live Today: https://www.tomshardware.com/news/teslas-dollar300-million-ai-cluster-is-going-live-today

Tesla is about to flip the switch on its new AI cluster, featuring 10,000 Nvidia H100 compute GPUs.

Tesla is set to launch its highly anticipated supercomputer on Monday, according to @SawyerMerritt. The machine will be used for various artificial intelligence (AI) applications, but the cluster is so powerful that it could also be used for demanding high-performance computing (HPC) workloads. In fact, the Nvidia H100-based supercomputer will be one of the most powerful machines in the world.

Tesla’s new cluster will employ 10,000 Nvidia H100 compute GPUs, offering a peak performance of 340 FP64 PFLOPS for technical computing and 39.58 INT8 ExaFLOPS for AI applications. That FP64 figure is higher than the 304 FP64 PFLOPS offered by Leonardo, the world’s fourth highest-performing supercomputer.
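The cluster totals are simply per-GPU peak figures multiplied by the GPU count. A quick sketch that works backwards from the numbers quoted above to the implied per-GPU throughput (these are derived by division from the article's totals, not taken from a datasheet):

```python
NUM_GPUS = 10_000

# Cluster-wide peak figures quoted above, in operations per second.
CLUSTER_FP64 = 340 * 10**15    # 340 PFLOPS
CLUSTER_INT8 = 3958 * 10**16   # 39.58 exa-operations per second

# Leonardo's FP64 figure quoted above, for comparison.
LEONARDO_FP64 = 304 * 10**15   # 304 PFLOPS

# Implied peak throughput of a single GPU.
per_gpu_fp64 = CLUSTER_FP64 // NUM_GPUS
per_gpu_int8 = CLUSTER_INT8 // NUM_GPUS

# How far ahead of Leonardo the cluster is on paper.
lead_over_leonardo = CLUSTER_FP64 / LEONARDO_FP64 - 1

print(f"Implied FP64 per GPU: {per_gpu_fp64 // 10**12} tera-operations/s")
print(f"Implied INT8 per GPU: {per_gpu_int8 // 10**12} tera-operations/s")
print(f"FP64 lead over Leonardo: {lead_over_leonardo:.1%}")
```

These are theoretical peaks; sustained performance on real workloads is normally lower.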

05. OpenAI disputes authors’ claims that every ChatGPT response is a derivative work: https://arstechnica.com/tech-policy/2023/08/openai-disputes-authors-claims-that-every-chatgpt-response-is-a-derivative-work

This week, OpenAI finally responded to a pair of nearly identical class-action lawsuits from book authors—including Sarah Silverman, Paul Tremblay, Mona Awad, Chris Golden, and Richard Kadrey—who earlier this summer alleged that ChatGPT was illegally trained on pirated copies of their books.

OpenAI claimed that the authors “misconceive the scope of copyright, failing to take into account the limitations and exceptions (including fair use) that properly leave room for innovations like the large language models now at the forefront of artificial intelligence.”

06. US Copyright Office wants to hear what people think about AI and copyright: https://www.theverge.com/2023/8/29/23851126/us-copyright-office-ai-public-comments

The agency wants to answer three main questions: how AI models should use copyrighted data in training; whether AI-generated material can be copyrighted even without a human involved; and how copyright liability would work with AI. It also wants comments around AI possibly violating publicity rights but noted these are not technically copyright issues. The Copyright Office said if AI does mimic voices, likenesses, or art styles, it may impact state-mandated rules around publicity and unfair competition laws.

Three artists sued generative AI art platforms Stable Diffusion and Midjourney and the art website DeviantArt for allegedly taking their art without their consent and using it to train AI models. Comedian Sarah Silverman and authors Christopher Golden and Richard Kadrey filed their own legal action against OpenAI and Meta for allegedly using their books to help improve ChatGPT and LLaMA.

07. Survey finds relatively few Americans actually use (or fear) ChatGPT: https://techcrunch.com/2023/08/28/survey-finds-relatively-few-americans-actually-use-or-fear-chatgpt

Ongoing polling by Pew Research shows that although ChatGPT is gaining mindshare, only about 18% of Americans have ever actually used it. Of course that changes by demographic: men, those aged 18-29, and the college educated are more likely to have used the system, though even among those groups it’s 30-40%.

Overall, though, only 19% of employed people who’d heard of the model thought it would affect their job in a major way, and 27% expect no impact whatsoever. Interestingly, even fewer people (15%) thought it would be helpful. But people in the “information and technology” sector especially, along with those in education and finance, are much more likely to expect major or minor changes. Few in hospitality, entertainment and hands-on industries like construction and manufacturing reported feeling that way.

In a separate and slightly more recent polling analysis, Pew researchers found that people are much more concerned in general about the role of AI in everyday life: 47%, up from 31% last year, said AI makes them “more concerned than excited.” And the more they know (or think they know) about AI, the more concerned they are.

08. Introducing ChatGPT Enterprise:

https://openai.com/blog/introducing-chatgpt-enterprise

Get enterprise-grade security & privacy and the most powerful version of ChatGPT yet.

Since ChatGPT’s launch just nine months ago, we’ve seen teams adopt it in over 80% of Fortune 500 companies. The 80% statistic refers to the percentage of Fortune 500 companies with registered ChatGPT accounts, as determined by accounts associated with corporate email domains.

We’ve heard from business leaders that they’d like a simple and safe way of deploying it in their organization. Early users of ChatGPT Enterprise—industry leaders like Block, Canva, Carlyle, The Estée Lauder Companies, PwC, and Zapier—are redefining how they operate and are using ChatGPT to craft clearer communications, accelerate coding tasks, rapidly explore answers to complex business questions, assist with creative work, and much more.

You own and control your business data in ChatGPT Enterprise. We do not train on your business data or conversations, and our models don’t learn from your usage.

https://openai.com/enterprise

09. OpenAI shatters revenue expectations, predicted to generate over $1 billion

https://the-decoder.com/openai-shatters-revenue-expectations-predicted-to-generate-over-1-billion

OpenAI is expected to generate more than $1 billion in revenue over the next 12 months, The Information reported, citing an internal source. That figure is well above the estimates OpenAI had previously given investors.

Prior to the launch of ChatGPT, the company projected revenue of just $28 million in 2021. The new $1 billion estimate implies that OpenAI is currently generating more than $80 million in revenue per month.
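The monthly figure follows from dividing the annual estimate by twelve. A quick check, using only the two dollar amounts quoted above (the comparison between them is rough, since they cover different periods):

```python
ANNUAL_ESTIMATE = 1_000_000_000   # "more than $1 billion" over the next 12 months
EARLIER_FIGURE = 28_000_000       # the $28 million figure quoted above

# Average revenue per month implied by the annual estimate.
monthly_revenue = ANNUAL_ESTIMATE / 12

# How many times larger the new estimate is than the earlier figure.
growth_multiple = ANNUAL_ESTIMATE / EARLIER_FIGURE

print(f"Implied monthly revenue: ${monthly_revenue / 1e6:.1f} million")
print(f"Multiple of the earlier figure: {growth_multiple:.1f}x")
```

Since the estimate is a lower bound, the roughly $83 million per month it implies is consistent with the "more than $80 million" claim.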

App developers and companies such as Jane Street, Zoom, Stripe, and Microsoft are already using OpenAI technology.

The trend is toward custom AI assistants for businesses, with Google, Microsoft, and OpenAI in direct competition.

10. Baidu launches Ernie chatbot after Chinese government approval

https://www.theverge.com/2023/8/31/23853878/baidu-launch-ernie-ai-chatbot-china

As with its main rival, ChatGPT, users can ask Ernie Bot questions or prompt it to help write market analysis, give marketing slogan ideas, and summarize documents. The company told The Verge Ernie Bot is available globally, but users need a Chinese phone number to register and log in. The Baidu app is available on US Android and iOS app stores but is only in Chinese.

Company representatives said Ernie Bot surpassed 1 million users in the first 19 hours after launch.

11. China leaps forward in the A.I. arms race as Alibaba releases a new chatbot that can ‘read’ images

https://finance.yahoo.com/news/china-leaps-forward-arms-race-200540997.html

Alibaba, one of China’s biggest tech companies, announced the release of two new A.I. models on Friday that dramatically level up the possibilities of artificial intelligence.

The open source models, called Qwen-VL and Qwen-VL-Chat, are vision language models, meaning they can “read” images as well as text, unlike competitors ChatGPT and Google Bard. Qwen-VL-Chat promises complex features like providing directions by scanning street signs, solving math equations based on a photo, and weaving together a narrative based on multiple pictures. For example, it can scan an image of a sign in a hospital written in Mandarin and then translate it into English, or help a news organization write a caption for a photo.

12. Google Cloud Next event

13. Bringing generative AI in Search to more people around the world

https://blog.google/products/search/google-search-generative-ai-india-japan

We’re bringing the generative AI experience in Search (SGE) to more people by making Search Labs available in India and Japan. We’re also making it even easier to find web pages that support information in AI-powered overviews, and sharing insights on what we’ve learned so far.

Like in the U.S., people in Japan and India will be able to use generative AI capabilities in their local languages, either by typing a query or using voice input. Unique to India, users will also find a language toggle to help multilingual speakers easily switch back and forth between English and Hindi. And Indian users can also listen to the responses, which is a popular preference. In both countries, Search ads will continue to appear in dedicated ad slots throughout the page.

14. Ideogram v0.1 is open to everyone for free

https://ideogram.ai/publicly-available

Ideogram (pronounced eye-diogram) is on a mission to help people become more creative through generative AI. We believe everyone has an innate desire to create and share their creations with each other. Our goal is to make creative expression universally accessible and fun. To this end, we invite dreamers from all backgrounds to join and collectively shape a more creative future. Please share your valuable feedback with us by liking your favorite images.

15. Identifying AI-generated images with SynthID

New tool helps watermark and identify synthetic images created by Imagen

AI-generated images are becoming more popular every day. But how can we better identify them, especially when they look so realistic?

Today, in partnership with Google Cloud, we’re launching a beta version of SynthID, a tool for watermarking and identifying AI-generated images. This technology embeds a digital watermark directly into the pixels of an image, making it imperceptible to the human eye, but detectable for identification.
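The announcement does not describe how SynthID embeds its watermark, so the following is not SynthID's method. It is a minimal sketch of a much simpler classic technique, least-significant-bit (LSB) embedding, shown only to illustrate the general idea of hiding a detectable signal in pixel values without visibly changing them. The pixel values, payload, and function names are all hypothetical.

```python
def embed_bits(pixels, bits):
    """Return a copy of pixels with each payload bit written into a pixel's lowest bit."""
    if len(bits) > len(pixels):
        raise ValueError("not enough pixels to hold the payload")
    marked = list(pixels)
    for i, bit in enumerate(bits):
        # Clear the lowest bit, then set it to the payload bit.
        marked[i] = (marked[i] & ~1) | bit
    return marked

def extract_bits(pixels, count):
    """Read the lowest bit of the first `count` pixels."""
    return [p & 1 for p in pixels[:count]]

# Hypothetical 8-bit grayscale pixel values and payload.
pixels = [200, 13, 77, 254, 91, 128, 65, 32]
payload = [1, 0, 1, 1, 0, 0, 1, 0]

marked = embed_bits(pixels, payload)
recovered = extract_bits(marked, len(payload))
max_change = max(abs(a - b) for a, b in zip(pixels, marked))

print("recovered payload matches:", recovered == payload)
print("largest change to any pixel:", max_change)
```

Each pixel changes by at most one brightness level, which is invisible to the eye. LSB marks are fragile, though: ordinary edits such as compression or resizing tend to destroy them.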

New type of watermark for AI images

Watermarks are designs that can be layered on images to identify them. From physical imprints on paper to translucent text and symbols seen on digital photos today, they’ve evolved throughout history.

16. New audio transcription tools for Substack

https://on.substack.com/p/transcription#details

We’ve just shipped some new features that make podcasting on Substack even better.

You can now use a special AI tool to create a clean transcript of your podcast episode or narration without having to do anything more than click a button.

The whole transcription process usually takes about a minute.

Once you’ve created the transcript, you can go in and edit it to make it just how you like it, and you can publish it in its own tab on the episode post page.

You can then select a passage and use it to generate an audiogram to share on social media.

17. META AI: Evaluating the fairness of computer vision models

https://ai.meta.com/blog/dinov2-facet-computer-vision-fairness-evaluation

Expanding DINOv2

We’re excited to announce that DINOv2, a cutting-edge computer vision model trained through self-supervised learning to produce universal features, is now available under the Apache 2.0 license. We’re also releasing a collection of DINOv2-based dense prediction models for semantic image segmentation and monocular depth estimation, giving developers and researchers even greater flexibility to explore its capabilities on downstream tasks. An updated demo is available, allowing users to experience the full potential of DINOv2 and reproduce the qualitative matching results from our paper.

By transitioning to the Apache 2.0 license and sharing a broader set of readily usable models, we aim to foster further innovation and collaboration within the computer vision community, enabling the use of DINOv2 in a wide range of applications, from research to real-world solutions. We look forward to seeing how DINOv2 will continue to drive progress in the AI field.

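FACET (FAirness in Computer Vision EvaluaTion), a benchmark for evaluating the fairness of computer vision models, helps us answer questions like: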

- Are models better at classifying people as skateboarders when their perceived gender presentation has more stereotypically male attributes?

- Are open-vocabulary detection models better at detecting backpackers who are perceived to be younger?

- Do standard detection models struggle to detect or segment people whose skin appears darker?

- Are these problems magnified when the person has coily hair compared to straight hair?

18. Samsung launched its AI-powered recipe app called Food

Artificial intelligence might soon decide your dinner plans.

Samsung launched its AI-powered Food app, which will use artificial intelligence to provide users with personalized recipes and food recommendations.

The app, launched in eight languages and 104 countries, boasts 160,000 recipes, Samsung said in a news release on Wednesday. The goals for the app seem to be as lofty as naming the app simply…Food.

The app could, for instance, set timers, pre-heat ovens, or change cooking settings based on the recipe chosen by users.

At face value, there would seem to be some value to the Food app. If you’re not feeling very creative it should, in theory, be able to take the ingredients you have on hand and spit out a recipe. Samsung Food is, after all, based on the popular food organization app Whisk, which the company acquired in 2019. With an added AI component, the Food app could, in theory, learn your preferences and modify recipes and shopping lists to fit your needs. The app could, for instance, make a recipe vegan or swap out ingredients for what’s on hand.

19. DoorDash Developing AI-Powered Voice Ordering for Restaurants

20. Uber Eats is reportedly developing an AI chatbot that will offer recommendations, speed up ordering

Uber Eats is developing an AI-powered chatbot that will offer recommendations to users and give them a quicker way to place orders.

The chatbot will ask users about their budget and food preferences, then help them place an order. It’s unknown when Uber plans to launch the chatbot publicly.

21. World creation by prompting

https://skybox.blockadelabs.com/

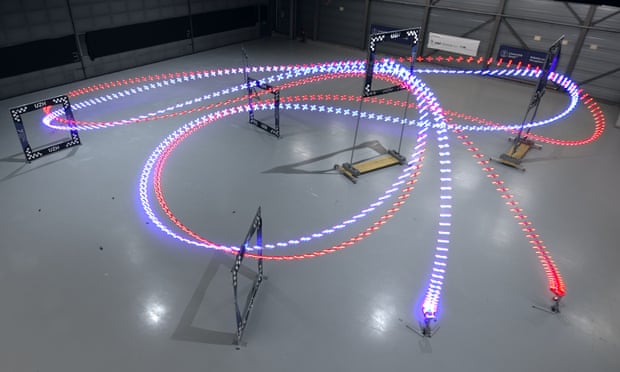

22. AI-powered drone beats human champion pilots

https://www.theguardian.com/technology/2023/aug/30/ai-powered-drone-beats-human-champion-pilots

Swift AI used a technique called deep reinforcement learning to win 15 out of 25 races against world champions.

Developed by researchers at the University of Zurich, the Swift AI won 15 out of 25 races against world champions and clocked the fastest lap on a course where drones reach speeds of 50mph (80km/h) and endure accelerations up to 5g, enough to make many people black out.